Blueprint #3 – Industrial AI Platform Architecture

Smart Factory Blueprint Series

Industrial systems are rapidly evolving from data collection platforms into AI-driven intelligent systems.

In the previous blueprints we explored:

- Blueprint #1 — Industrial Data Pipeline Architecture

- Blueprint #2 — Industrial Streaming Architecture

Those architectures focused on data ingestion and real-time processing.

However, the real value of industrial data emerges when it is used to power Artificial Intelligence systems.

This blueprint explains how to design a production-grade Industrial AI Platform Architecture that enables scalable machine learning in manufacturing environments.

The Role of an Industrial AI Platform

Industrial AI platforms transform operational data into actionable intelligence.

They enable organizations to:

- train machine learning models

- deploy models into production

- perform real-time inference

- monitor model performance

- manage the entire ML lifecycle

Without a proper platform, many industrial AI initiatives fail due to:

- fragmented infrastructure

- lack of deployment pipelines

- absence of monitoring

- difficulty scaling AI solutions

A well-designed platform enables reliable and scalable industrial AI systems.

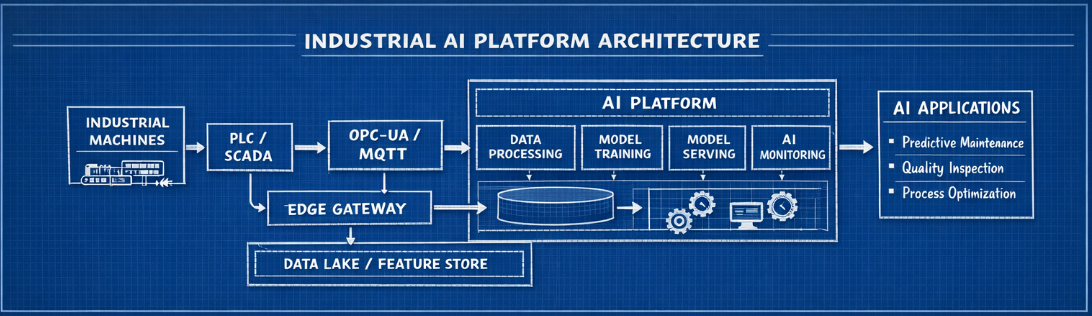

Industrial AI Architecture Overview

Industrial AI platforms sit on top of the industrial data infrastructure.

The data flow typically follows this pattern:

Industrial Machines ↓ PLC / SCADA ↓ OPC-UA / MQTT ↓ Edge Gateway ↓ Streaming Platform (Kafka) ↓ Data Lake / Feature Store ↓ AI Platform ↓ AI Applications

This layered architecture separates data ingestion, processing, training, and inference, making the system scalable and maintainable.

Core Components of the Industrial AI Platform

The Industrial AI platform consists of several critical layers.

1. Data Layer

AI systems rely heavily on large volumes of high-quality data.

Industrial data sources typically include:

- sensors

- PLC signals

- SCADA historians

- MES systems

- quality inspection systems

Typical technologies used in the data layer:

| Component | Technology |

|---|---|

| Data Lake | S3 / HDFS |

| Streaming | Kafka |

| Query Engine | Trino / Athena |

| Data Warehouse | Snowflake / Redshift |

This layer provides the historical datasets required for machine learning training.

2. Feature Engineering Layer

Raw industrial data must be transformed into meaningful features before being used for AI.

Examples of industrial features:

- rolling vibration averages

- equipment utilization metrics

- temperature gradients

- frequency spectrum components

Feature engineering pipelines are commonly built with:

- Apache Spark

- Apache Flink

- Kafka Streams

- Python pipelines

A Feature Store is often introduced to store reusable ML features.

Popular feature store technologies include:

- Feast

- Hopsworks

- Tecton

Feature stores help maintain consistency between training and inference data.

3. Model Training Platform

Machine learning training requires scalable compute infrastructure.

Typical environments include:

- Kubernetes clusters

- GPU nodes

- distributed training frameworks

Common tools include:

| Component | Tool |

|---|---|

| Training framework | PyTorch / TensorFlow |

| Experiment tracking | MLflow |

| Pipeline orchestration | Kubeflow / Airflow |

| Data versioning | DVC / LakeFS |

These tools help manage experiments and ensure reproducibility.

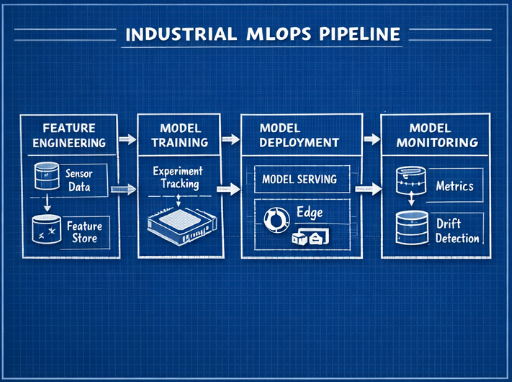

Industrial MLOps Pipeline

Industrial MLOps pipelines typically include:

- Data ingestion

- feature engineering

- model training

- model validation

- model deployment

- model monitoring

MLOps ensures that models can be continuously improved and reliably deployed.

4. Model Serving Layer

Once models are trained, they must be deployed to perform inference.

Industrial environments require several inference modes:

- real-time inference

- batch inference

- edge inference

Common serving technologies:

| Use Case | Technology |

|---|---|

| Online inference | KServe |

| Model deployment | Seldon |

| API serving | FastAPI |

| GPU inference | NVIDIA Triton |

Low latency is critical for many industrial applications.

5. AI Monitoring & Observability

AI models degrade over time due to:

- data drift

- concept drift

- process changes

- equipment replacement

Monitoring systems track:

- model accuracy

- feature drift

- prediction distributions

- inference latency

Typical monitoring tools include:

| Monitoring Type | Tool |

|---|---|

| Metrics | Prometheus |

| Visualization | Grafana |

| ML monitoring | Evidently AI |

| Logging | ELK Stack |

Without monitoring, AI models may silently degrade in production.

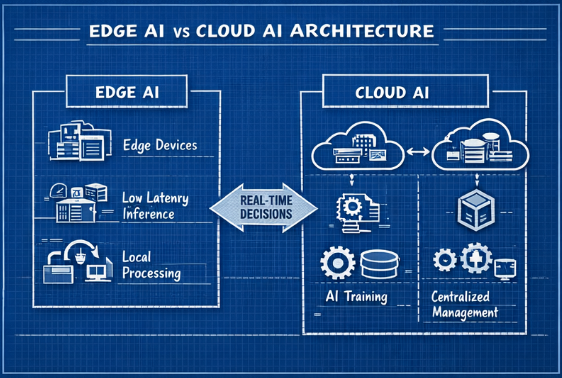

Industrial AI Deployment Patterns

Industrial AI systems are deployed in several architectural patterns.

Edge AI

Edge AI executes models directly on the factory floor.

Advantages include:

- low latency

- offline operation

- reduced cloud bandwidth usage

Typical edge hardware:

- NVIDIA Jetson

- industrial PCs

- edge Kubernetes clusters (K3s)

Common use cases:

- visual inspection

- anomaly detection

- robotics control

Cloud AI

Cloud-based AI platforms provide centralized infrastructure for model training and large-scale analytics.

Advantages include:

- massive compute resources

- centralized management

- simplified MLOps pipelines

Typical use cases:

- predictive maintenance

- fleet analytics

- process optimization

Edge vs Cloud AI Architecture

Most factories implement a hybrid architecture:

Edge AI → Real-time inference Cloud AI → model training and optimization

This architecture balances latency, scalability, and operational efficiency.

Example Industrial AI Use Cases

Predictive Maintenance

Sensors monitor machine health metrics such as:

- vibration

- temperature

- pressure

AI models can predict failures including:

- bearing degradation

- motor faults

- abnormal operating conditions

Visual Quality Inspection

Computer vision systems detect manufacturing defects including:

- scratches

- surface contamination

- assembly errors

Common technologies include:

- YOLO

- ResNet

- Vision Transformers

Process Optimization

AI models can optimize production processes by improving:

- yield

- machine parameters

- energy efficiency

Common optimization methods include:

- reinforcement learning

- Bayesian optimization

Security Considerations

Industrial AI platforms must be secured against cyber threats.

Key security practices include:

- secure data ingestion pipelines

- encrypted model artifacts

- role-based access control

- audit logging

Additionally, IT and OT networks must remain properly segmented.

Design Considerations for Industrial AI Platforms

Several architectural challenges must be considered.

Data Quality

Industrial sensor data is often noisy or incomplete.

Robust preprocessing pipelines are essential.

Real-Time Requirements

Some AI use cases require millisecond latency.

This often requires edge AI deployment.

Model Lifecycle Management

AI platforms must support:

- model versioning

- rollback capabilities

- reproducible training pipelines

OT System Integration

AI outputs often need to integrate with:

- SCADA

- MES

- control systems

Careful validation is required before connecting AI outputs to control loops.

Conclusion

Industrial AI platforms enable factories to move beyond simple data collection toward data-driven operations.

By combining:

- industrial data pipelines

- real-time streaming architectures

- scalable AI infrastructure

- mature MLOps practices

manufacturers can deploy reliable AI systems at scale.

The biggest challenge is not building machine learning models —

it is building the platform that runs them reliably in production.

Next Blueprint

The next article in this series will explore:

➡ Blueprint #4 — Industrial Computer Vision Architecture

This blueprint will explain how factories deploy large-scale AI vision inspection systems for automated quality control.