Blueprint #4 -- Industrial Computer Vision Architecture

Blueprint #4 - Industrial Computer Vision Architecture

Modern smart factories increasingly rely on AI-based computer vision for quality inspection, safety monitoring, and production analytics.

Traditional machine vision systems were rule-based and rigid. Today’s factories deploy deep learning models running on edge GPUs capable of detecting defects, anomalies, and operational risks in real time.

This blueprint explains how to design a scalable Industrial Computer Vision architecture integrating:

- Edge AI inference

- Real-time video streaming

- Model lifecycle management

- Industrial data pipelines

Industrial Computer Vision Challenges

Massive Video Data

Source Data Rate —————— ————- 4K Camera 10–20 Mbps Multiple Cameras 100+ Mbps 24/7 Operation TBs per day

Sending raw video to the cloud is not practical for most factories.

Low Latency Requirements

Inspection decisions must occur in milliseconds.

Examples include:

- PCB defect detection

- Bottle fill level inspection

- Surface scratch detection

These workloads require edge inference close to the production line.

Harsh Industrial Environments

Factories introduce additional constraints:

- unstable lighting

- vibration

- dust

- legacy PLC integration

- isolated industrial networks

Architectures must therefore be resilient and autonomous.

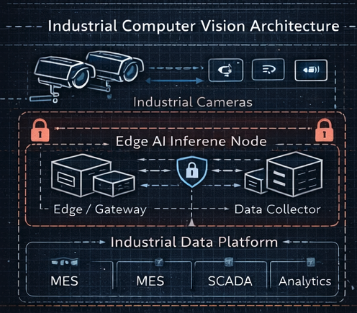

Industrial Computer Vision Architecture

The recommended architecture separates workloads into four layers:

- Vision Edge Layer

- Edge AI Processing

- Industrial Data Platform

- AI Model Lifecycle

Architecture Overview

Vision Edge Layer

Industrial cameras generate continuous video streams.

Typical devices include:

- GigE industrial cameras

- USB3 vision cameras

- smart cameras

Protocols:

- RTSP

- GigE Vision

- USB Vision

These streams are processed by edge AI inference nodes.

Edge AI Processing

Edge servers run GPU‑accelerated inference pipelines.

Component Technology —————— ——————————– Edge compute NVIDIA Jetson / Industrial GPU Inference engine TensorRT Vision models YOLO / Detectron2 Video pipeline GStreamer Runtime Docker

Typical pipeline:

Camera → Video Capture → Pre‑Processing → AI Inference → Detection → Event Generation

Only metadata and results are sent upstream.

Industrial Data Platform

Vision results integrate with the broader factory data platform.

System Purpose ———– ———————- Kafka Event streaming Data Lake Image storage MES Quality inspection SCADA Alarm monitoring BI tools Production analytics

Example event:

1

2

3

4

5

6

{

"camera_id": "line3_cam2",

"timestamp": "2026-03-15T10:21:00",

"defect_type": "surface_scratch",

"confidence": 0.94

}

AI Model Lifecycle

Industrial AI systems require continuous improvement.

Lifecycle steps:

- Data Collection

- Annotation

- Model Training

- Evaluation

- Deployment to Edge

Common tools:

Component Example ——————— —————————- Dataset storage S3 / Data Lake Annotation CVAT Training PyTorch Experiment tracking MLflow Deployment Kubernetes / Edge registry

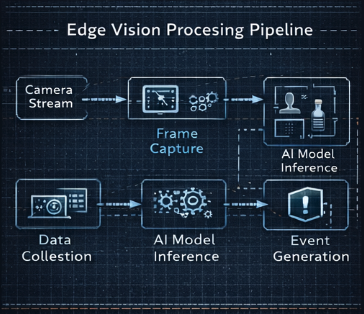

Edge Vision Processing Pipeline

Frame Capture

Frames are captured at:

- 30–60 FPS

- high resolution

Using tools such as:

- OpenCV

- GStreamer

Pre‑Processing

Typical steps:

- resizing

- normalization

- cropping

- color conversion

AI Inference

Task Example —————— ——————– Object detection missing components Classification defect types Segmentation surface anomalies Pose estimation robot inspection

Popular models:

- YOLOv8

- EfficientDet

- Mask R‑CNN

Event Generation

Inference results become factory events.

Examples:

1

2

3

4

defect_detected

worker_without_helmet

missing_component

product_orientation_error

Events are typically streamed through Kafka or MQTT.

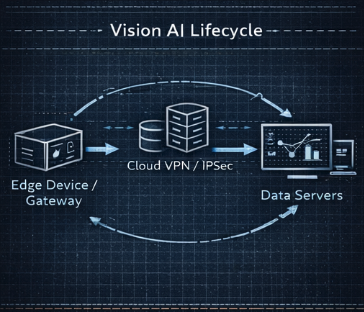

Industrial Vision AI Lifecycle

Data Collection

Edge nodes store:

- defect images

- false positives

- rare edge cases

These datasets are used for model retraining.

Data Annotation

Common tools:

- CVAT

- Label Studio

- Supervisely

Model Training

Training normally runs on centralized GPU clusters.

Stack example:

1

2

3

4

PyTorch

CUDA

Distributed training

Data augmentation

Continuous Deployment

Validated models are deployed through:

- container registry

- OTA updates

- edge orchestration

Edge nodes automatically pull the latest version.

Security Considerations

Important controls:

- network isolation

- signed AI models

- secure boot for edge devices

- encrypted storage

- remote attestation

Scalability Strategy

Factories may deploy hundreds of cameras.

Strategies:

- horizontal edge scaling

- distributed event streaming with Kafka

- centralized model registry

Key Design Principles

Successful industrial computer vision platforms follow several principles:

Edge‑First Processing

Perform inference close to machines.

Event‑Driven Architecture

Transmit only results instead of full video streams.

Continuous Learning

Models evolve with production changes.

Industrial Integration

Vision systems integrate with MES, SCADA, and ERP.

Smart Factory Blueprint Series

- Blueprint #1 – Industrial Data Pipeline Architecture

- Blueprint #2 – Industrial Streaming Architecture

- Blueprint #3 – Industrial AI Platform Architecture

- Blueprint #4 – Industrial Computer Vision Architecture

- Blueprint #5 – Industrial Digital Twin Architecture

- Blueprint #6 – Industrial Edge Computing Architecture

Image files expected in:

/assets/img/blueprints/

Required images:

blueprint4-computer-vision-architecture.png blueprint4-edge-vision-pipeline.png blueprint4-vision-ai-lifecycle.png